How to Edit AI-Generated Academic Writing in 2026: 7 Steps to Publish Faster Without Sacrificing Credibility


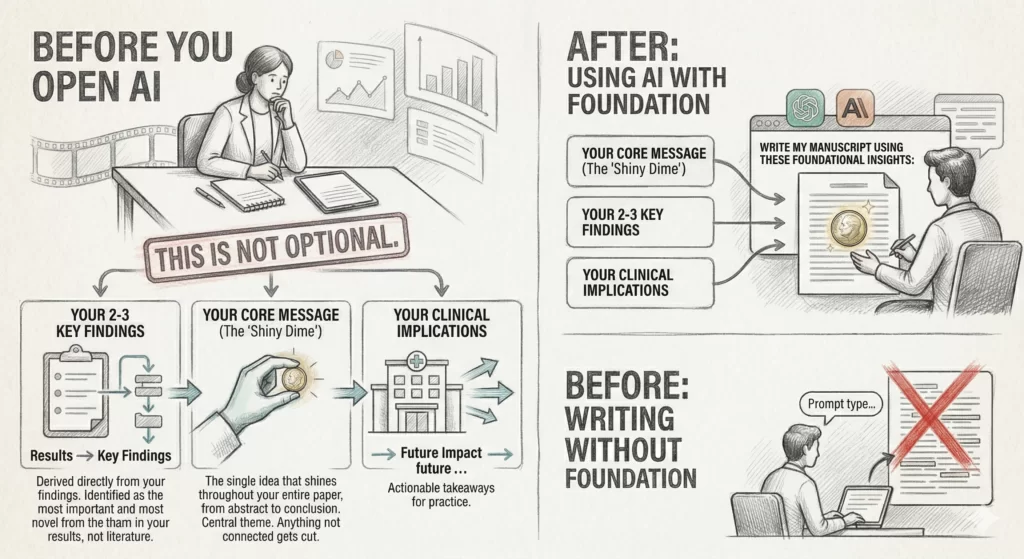

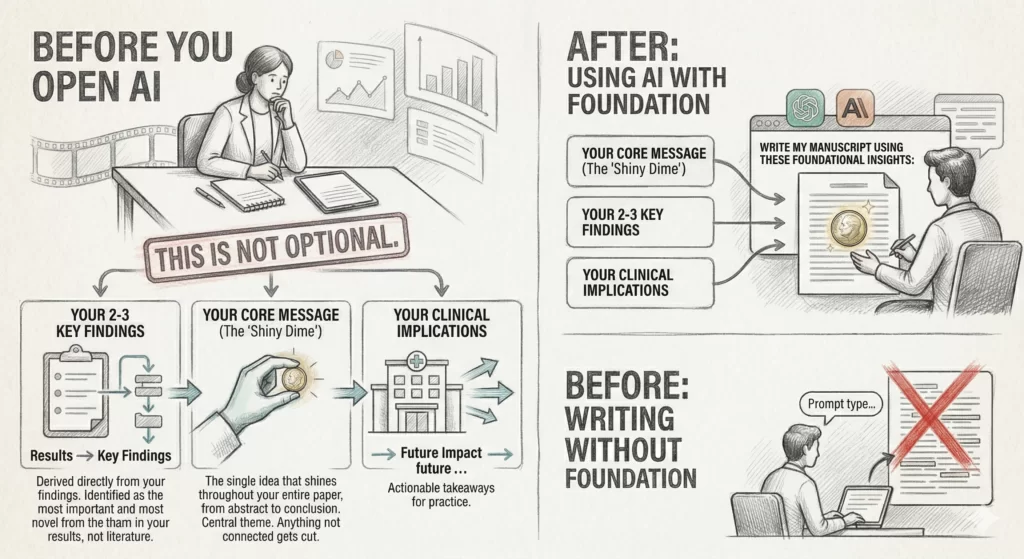

Read at RisingResearcherAcademy.com.

Pasting AI output into your paper = sending a trainee’s draft to reviewers without reading it. You would never do that with a trainee. So why are you doing it with ChatGPT?

I use AI daily in my research workflow. I have built custom GPTs. I have built an entire AI agent for academic writing (Research Boost). I teach clinical researchers how to use AI responsibly. And I still edit almost everything AI produces.

But editing does not begin when the AI finishes generating text. Editing begins before you type a single prompt. The quality of what you get out of AI depends almost entirely on what you put in. And what you put in is not a clever prompt template you copied from Twitter. It is your synthesized thinking. Your distilled expertise. Your core message, your key findings, and the high-impact references that ground those findings in the literature.

Get that right, and the AI draft gives you something worth editing. Skip it, and you spend hours polishing a draft that was never headed in the right direction.

Here’s my 7-step editing process. Steps 1 and 2 happen upstream, before the AI writes anything. Steps 3 through 7 happen after. All 7 are non-negotiable.

STEP 1: Start With Your Own Thinking

Before you open ChatGPT or Claude, before you type a prompt, before you do anything with AI, sit down and write three things by hand or in a blank document:

This is not optional. In my manuscript writing framework, the core message is what I call the “shiny dime.” It is the single idea that should shine throughout your entire paper, from abstract to conclusion. Think of it as the central theme of a film. Every scene serves that theme. Anything that does not connect to the core message gets cut.

The core message is derived directly from your key findings. Not from the literature. Not from your original research question. From your results. You run the analysis, identify the 2-3 most important and most novel findings, and synthesize those into one overarching takeaway. Here is the order:

Results → Key Findings → Core Message

Not the other way around.

This is what I mean by editing starting upstream. If you feed AI a vague prompt like “write a discussion section about psoriatic arthritis,” you will get a vague discussion section. Generic phrasing. Empty conclusions. Filler paragraphs that sound plausible but say nothing.

The Kusumegi et al. Science study found that researchers who adopted LLMs posted dramatically more papers, but the increased output did not correspond to increased quality as measured by downstream citations and peer review acceptance. More volume, less signal. The antidote is not to avoid AI. It is to bring sharper thinking to the AI before it writes anything.

But if you feed the AI your core message, your key findings, and the specific studies that support them, you get a draft that is already 60% of the way to being publishable. That remaining 40% is where your expertise, judgment, and taste come in. And that is what Steps 3-7 are about.

Action step: Before your next AI writing session, fill out these 5 key manuscript elements in order:

Get all co-authors to agree on these five elements before anyone writes a word. This prevents the chaotic feedback loop where everyone has a different vision for the paper.

STEP 2: Bring Your Key References

This is the second upstream step that most researchers skip. AI models hallucinate citations. This is not an edge case. It is the norm.

A 2024 study in the Journal of Medical Internet Research found that LLMs produced fabricated references in 28.6% to 91.3% of cases when asked to generate citations for systematic reviews. A 2025 study in JMIR Mental Health tested GPT-4o specifically on mental health topics and found fabrication rates ranging from 6% for well-established disorders to 29% for more specialized conditions. And in January 2026, GPTZero’s analysis of over 4,000 papers accepted at NeurIPS 2025, one of the world’s most prestigious AI conferences, uncovered hundreds of hallucinated citations that slipped past multiple peer reviewers.
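One way to catch invented citations early is to compare every DOI in an AI draft against the list of references you have personally verified. The sketch below is a minimal illustration, not a full citation checker: the function names are hypothetical, the DOI regex is a common heuristic and not the complete DOI grammar, and it only covers citations that include a DOI. The DOIs in the usage note are made-up placeholders.

```python
import re

# Heuristic DOI pattern: "10.", a 4-9 digit registrant code, "/", then a suffix.
# Assumption: good enough for typical journal DOIs, not a complete grammar.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/[^\s\"<>]+")


def normalize_doi(doi: str) -> str:
    """Lowercase a DOI and strip punctuation that trails it in running text."""
    return doi.rstrip(".,;:)]").lower()


def find_unverified_dois(draft_text: str, verified_dois: set[str]) -> list[str]:
    """Return DOIs that appear in the draft but not in the verified set.

    The result keeps first-seen order and contains no duplicates.
    """
    verified = {normalize_doi(d) for d in verified_dois}
    unverified: list[str] = []
    for match in DOI_PATTERN.findall(draft_text):
        doi = normalize_doi(match)
        if doi not in verified and doi not in unverified:
            unverified.append(doi)
    return unverified
```

For example, with `verified = {"10.1000/ABC123"}` and a draft that cites `10.1000/abc123` and `10.9999/fake.2024.001`, the function returns only the second DOI. Anything it returns still needs a manual check; an empty result does not prove the remaining citations say what the draft claims.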

Use my 3×3 Strategy: For each key idea in your manuscript, identify at least 3 key references that support it. And 3 that refute it. These anchor your understanding of the field before you build out the full reference list.

When you give AI your core message along with specific supporting citations, two things happen. First, the AI can ground its writing in real evidence instead of generating plausible-sounding fiction. Second, you dramatically reduce the fact-checking burden on the back end, because you have already done the intellectual work of identifying what matters.

While I was building Research Boost, these were exactly the core principles that I followed. It will prompt you to provide these key manuscript elements and find the key high-quality references for you before writing anything.

The ICMJE 2025 guidelines now explicitly state that AI tools cannot be credited as authors, that all AI-generated content remains the responsibility of human authors, and that authors must verify facts, references, and interpretations. The 2025 update goes further: it holds authors as the sole stakeholders responsible for providing accurate references and prohibits referencing AI-generated material as a source.

Your subject matter expertise is not a nice-to-have. It is the entire reason AI writing works in academic research. Without it, you are just generating text (AI slop). With it, you are accelerating your own thinking.

Action step: Before prompting AI, assemble your 3-5 anchor references. Paste the relevant findings and conclusions directly into your prompt alongside your core message and key findings.

STEP 3: Fix Structure Before Sentences

Now the AI has produced a draft. You have something on the page. The instinct is to start wordsmithing sentences. Resist that instinct. Your first editing pass should be structural. Ask:

This structural discipline is even more important with AI than it was before AI. Research on AI-assisted writing in higher education has found that while AI tools improve grammatical quality and coherence at the sentence level, they often produce text with predictable structures, limited creative nuance, and a tendency toward stylistic convergence that can dilute the depth of academic discourse.
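A structural pass is easier when the sentences are out of the way. The sketch below pulls only the opening sentence of each paragraph so you can read the skeleton of a draft in order. It is a rough aid under stated assumptions: paragraphs are separated by blank lines, sentences end in ".", "!" or "?", and the function name is hypothetical. Abbreviations such as "et al." will cut a sentence short, so treat the output as a prompt for your own reading.

```python
import re


def topic_sentences(draft_text: str) -> list[str]:
    """Return the first sentence of each blank-line-separated paragraph."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", draft_text) if p.strip()]
    outline: list[str] = []
    for paragraph in paragraphs:
        # Collapse internal line breaks so a wrapped sentence stays whole.
        flat = " ".join(paragraph.split())
        # Split once, right after the first sentence-ending punctuation mark.
        first = re.split(r"(?<=[.!?])\s+", flat, maxsplit=1)[0]
        outline.append(first)
    return outline
```

Printing the returned list one item per line gives a skeleton of the section. If the argument does not hold together at that level, the problem is structural, and sentence edits will not fix it.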

For the Results section specifically, check whether the AI has given you data or results. Data is raw numbers. Results are numbers plus interpretation. Every key finding in your results section should connect the dots for the reader, not leave them staring at a table wondering what it means.

For the Discussion section, check the arc. The discussion is the mirror image of the introduction. The introduction moves broad → narrow (problem → gap → objective). The discussion moves narrow → broad (core message → comparison with literature → implications). If the AI has written a discussion that reads like a second literature review, the structure is broken. No amount of sentence-level polishing will fix it.

The reverse outline technique: After your first structural pass, break down the important points of each paragraph into bullet points. Read only those bullet points in order. If the logic does not flow, you have a structural problem. Fix it before touching a single word.

STEP 4: Replace Generic Phrases With Specific Claims

AI defaults to safe, generic language. It loves phrases like: “This has significant implications for clinical practice.” “Several studies have reported similar findings.” “Further research is warranted.” None of these are wrong. They are just empty. They pass the grammar check and fail the “so what?” test. Reviewers trust specifics. They question generalities.

This is not a new problem. The most common reason for manuscript rejection has always been poor interpretation of results, not poor grammar (Byrne, Publishing Your Medical Research). But AI amplifies the problem because it is optimized for producing text that sounds authoritative without saying anything specific. A PMC review of AI in scientific writing found that while AI enhances the clarity of texts (particularly for non-native English speakers), its key limitations include technical inaccuracies and excessive standardization of writing style.

Replace every generic claim with a specific one:
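Finding the generic claims is the part that can be mechanized; rewriting them cannot. The sketch below flags sentences that contain one of the three stock phrases quoted in this step. The phrase list is only a starting point taken from those examples, the function name is hypothetical, and the sentence splitting is a simple heuristic that assumes sentences end in ".", "!" or "?".

```python
import re

# Stock phrases quoted in this step; extend the tuple with your own offenders.
GENERIC_PHRASES = (
    "significant implications for clinical practice",
    "several studies have reported similar findings",
    "further research is warranted",
)


def flag_generic_sentences(
    draft_text: str, phrases: tuple[str, ...] = GENERIC_PHRASES
) -> list[tuple[str, str]]:
    """Return (sentence, matched phrase) pairs for sentences with a stock phrase."""
    flat = " ".join(draft_text.split())
    sentences = re.split(r"(?<=[.!?])\s+", flat)
    flagged: list[tuple[str, str]] = []
    for sentence in sentences:
        lowered = sentence.lower()
        for phrase in phrases:
            if phrase in lowered:
                # Record the first matching phrase only, then move on.
                flagged.append((sentence, phrase))
                break
    return flagged
```

Each flagged sentence is a candidate for the “so what?” test: name the population, the direction, and the magnitude, or cut the sentence.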

The specificity principle applies across the entire manuscript. Your core message should be specific (includes the population, direction, and magnitude where possible). Your clinical implications should be specific (not “clinicians should consider this” but “rheumatologists treating PsA patients under 40 with enthesitis should screen earlier”). Your results should be specific (provide the numbers in the text, then reference the table at the end, not the other way around).

Common core message mistake: Stating what you set out to do rather than what you found. Readers want your findings, not your intentions. If your core message reads like a research question, it is not a core message yet.

STEP 5: Cut Ruthlessly

Use these three techniques:

Dr. Joseph Garland (late editor of NEJM) put it well:

The process of revision and rewriting is time-consuming, painful, and necessary. Experienced researchers go through 20 or more drafts before submission. The AI gives you a fast first draft. That is its contribution. Your contribution is the 19 drafts after that.

Here is the test for every sentence in your paper: Does this sentence move the argument forward? If no, cut it. AI drafts tend to run long because the model is optimizing for sounding comprehensive. You need to optimize for being clear. Those are different goals.

STEP 6: Add Your Interpretation

This is the step that separates a forgettable paper from one that gets cited. And it is the step that AI cannot do for you. AI can summarize. It can organize. It can produce grammatically correct paragraphs that accurately describe what prior studies showed. It cannot synthesize.

Synthesis means answering: What does this mean for my patients? Why does this matter now? What should someone do differently based on what I found?

Your perspective is what makes the Discussion section the most creative part of your paper. The Discussion has fewer rigid rules than other sections. It is supposed to be the interpretive, perspective-driven section. Most researchers struggle here precisely because of this freedom. They fall back on summarizing prior studies instead of providing their own interpretation.

Structure helps. For each key finding:

Each paragraph in the discussion should have a clearly defined core message of its own. Topic sentence → supporting evidence → linking sentence. If you cannot state the point of a paragraph in one sentence, the paragraph needs rewriting.

The clinical implications are where you close the loop. They must relate back to the broad problem you opened with in the first paragraph of your paper. This creates a satisfying narrative arc. The story that began with the problem is now resolved with implications. Be specific: not “clinicians should consider multimorbidity” but “developing systematic screening protocols for depression and associated comorbidities may improve long-term outcomes in ankylosing spondylitis.”

This interpretive layer is the part of your paper that is uniquely yours. No AI can provide it, because no AI has treated your patients, reviewed your data with your clinical lens, or sat in the conferences where the debates in your field play out in real time. You are the writer. AI is your research assistant. The interpretation and judgment are yours.

STEP 7: Read It Out Loud, Then Fact-Check Everything

Two final passes.

PASS 1: Read it out loud. If you stumble over a sentence, the sentence needs rewriting. Reading aloud catches passive constructions, awkward transitions, and run-on sentences that your eyes skip over on screen.

PASS 2: Fact-check everything. AI is confidently wrong on a regular basis.

Check numerical results. AI fabricates plausible but false statistics.

Check citations. Even the latest models hallucinate author names and journal titles. Click every reference. Verify it exists. Verify it says what the AI claims it says. The NeurIPS findings from January 2026 are a warning: hallucinated citations slipped past multiple expert peer reviewers at the most competitive AI conference in the world. If peer reviewers at NeurIPS missed them, you will miss them too unless you verify each one manually.

Check internal consistency. The same sample size, the same terms, the same definitions across all sections. AI drafts are notorious for introducing subtle inconsistencies between the methods, results, and discussion.

Check logic. Does the claim follow from the evidence, or did the AI over-interpret? Did it draw causal conclusions from observational data? Did it overstate your findings beyond what the study design supports?

A polished paragraph with a wrong number is worse than a rough draft with the right one.

Pro tip: Use thinking models (Claude Opus 4.6, GPT 5.4) for your writing tasks. Free plans default to smaller, less capable models. The difference in quality between a frontier model and a free-tier model is substantial for academic writing.

The Real Lesson

Editing AI-generated academic writing is not a post-production task. It is a full-production workflow that starts with your expertise and ends with your judgment.

The upstream work matters more than anything you do after the AI generates text. Your core message. Your key findings. Your anchor references. These are the inputs that determine whether the AI draft is worth editing or whether it needs to be thrown out entirely.

After the draft exists, your job is to inject your expertise, your unique perspective, and your clinical judgment into every paragraph. Cut what does not serve the core message. Replace generic language with specific claims. Add the interpretation that only you can provide.

Ultimately, you are the one who is responsible. Responsible for the accuracy. Responsible for the interpretation. Responsible for every word that goes into the final manuscript. The AI accelerated the process. You own the product. This is what it means to use AI as a research tool and not a research replacement.

P.S. If you want a purpose-built tool for the academic writing process (persistent context + literature grounding + structured IMRaD workflow), that’s what we built Research Boost to do.
Try it FREE at https://researchboost.com/

The post How to Edit AI-Generated Academic Writing in 2026: 7 Steps to Publish Faster Without Sacrificing Credibility appeared first on Rising Researcher Academy.

Best wishes,
Paras

Paras Karmacharya, MD MS
Founder @Rising Researcher Academy
