This One Research Mindset Shift That Will Help You Win With AI: From Role-Based Thinking to Workflow-Based Thinking


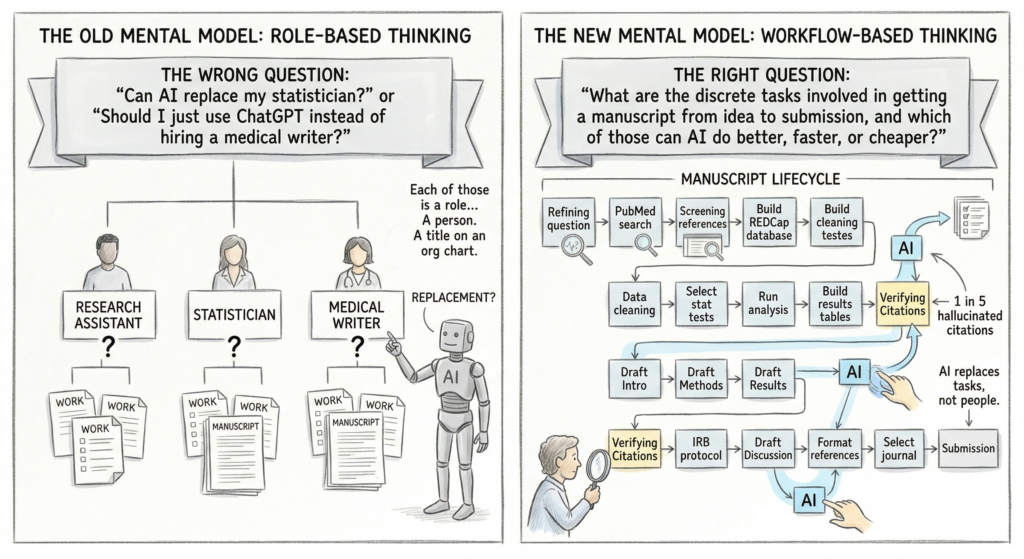

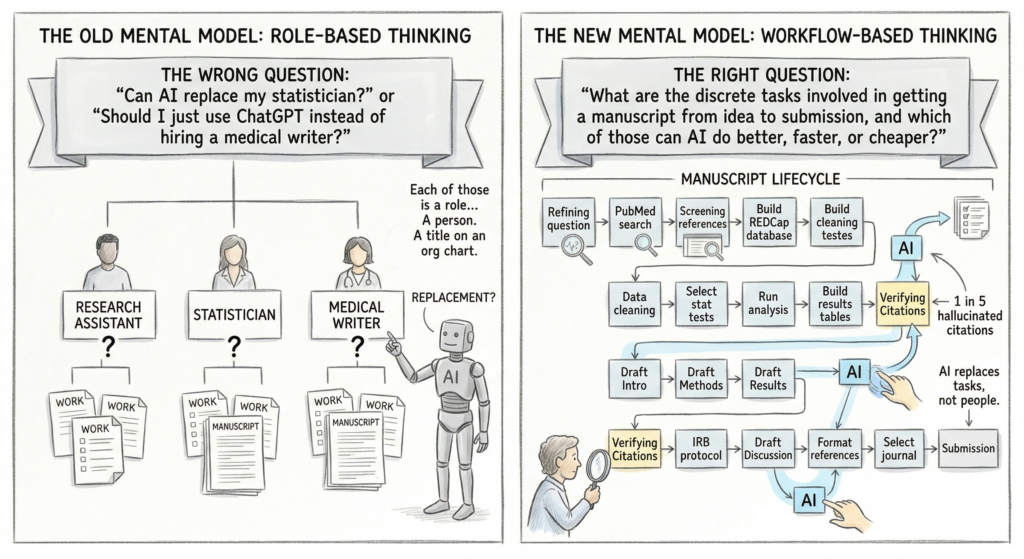

Read at RisingResearcherAcademy.com.

Most researchers think about AI the wrong way. They ask: “Can AI replace my statistician?” or “Should I just use ChatGPT instead of hiring a medical writer?”

Wrong questions. Both of them.

The right question: What are the 15 discrete tasks involved in getting a manuscript from idea to submission, and which of those tasks can AI do better, faster, or cheaper than I can do them manually?

That is the shift from role-based thinking to workflow-based thinking. And it will determine who publishes efficiently over the next five years and who keeps grinding through the same slow process with diminishing returns. Let me explain.

1. The Old Mental Model Is No Longer Applicable

Here is how most research teams think about getting a paper published: “I need a research assistant to help with data. I need a statistician for the analysis. I need a medical writer (or my own late nights) for the manuscript.”

Each of those is a role. A person. A title on an org chart or a line item on a grant budget. But underneath each role sits a stack of individual tasks.

The statistician does not do one thing. They clean data, check assumptions, select models, run analyses, build tables, interpret output, and write a methods section. That is 7 tasks, minimum.

The research assistant does not do one thing either. They screen articles, extract data, manage REDCap, coordinate IRB amendments, format references, and chase co-authors for edits.

This distinction matters because AI does not replace people. It replaces tasks. McKinsey’s latest analysis frames AI’s impact in terms of “technical automation potential” rather than jobs lost, finding that most roles will change rather than disappear, with tasks redistributed between humans and AI systems. MIT researchers David Autor and Neil Thompson reached a similar conclusion: automation both replaces experts and augments expertise, depending on whether the simple or complex tasks within a role are the ones being automated.

The same principle applies in research. AI will not replace the clinical researcher. But it will reshape which tasks require your full attention and which ones it can draft, format, or accelerate for you.

Figure. The shift from role-based to workflow-based thinking identifies specific tasks AI can accelerate rather than viewing it as a replacement for entire professional roles.

2. Why This Matters Right Now

The pace is real. A study published in Science analyzing over 2 million preprints found that researchers who adopted AI writing tools posted up to 50% more papers afterward, with the largest gains among non-native English speakers.

Output is increasing. But so is noise. That same study found something concerning: many of the AI-polished papers failed to deliver real scientific value, creating a growing gap between polished writing and meaningful results.

More papers is not the goal. Better papers, faster, is. And the way to get there is not to hand your manuscript to a chatbot and hope for the best. It is to identify which specific tasks in your workflow AI can genuinely accelerate and which ones still demand your expertise.

Harvard Business School researchers found that AI could help professionals stretch beyond their usual roles, but the technology hits a clear wall when people lack sufficient domain expertise. Professionals with relevant skills used AI effectively.
Those without the foundational knowledge lagged behind their skilled colleagues by 13% when researchers analyzed their output for clarity and competence, even with the same AI tools.

The takeaway for researchers: your training in study design, statistical reasoning, and clinical interpretation is not made obsolete by AI. It is what makes AI useful.

3. Map Your Workflow, Not Your Org Chart

Here is an exercise I challenge every researcher to try this week. Write down every task involved in taking a study from idea to published manuscript. Not the roles. The tasks. Get granular. Here is what that list might look like:

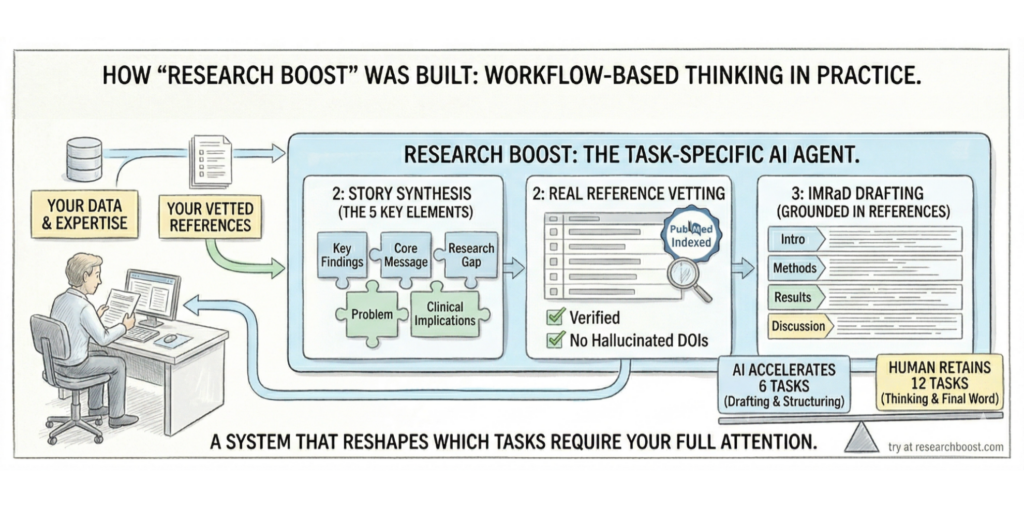

That is 18 tasks. Some of them take minutes. Some take weeks. Now ask yourself: Which of these can AI meaningfully accelerate? Not all of them. Not equally. But several of them, significantly.

AI can draft an IRB protocol if you provide the study details. It can generate a first-pass literature search if you give it the right question. It can write a methods section if you feed it your analysis plan. It can format references. It can build a structured abstract from a completed manuscript.

But notice: every single one of those requires your input. Your research question. Your data. Your judgment about what matters. AI is a tool that operates on tasks. Not a colleague who fills a role.

4. But There Is a Catch (and It Is a Big One)

One of the most tempting use cases for AI in research is generating references. It is also one of the most dangerous.

A Deakin University study found that ChatGPT (GPT-4o) fabricated roughly one in five academic citations, with more than half of all generated citations (56%) being either fake or containing errors. That is not a minor glitch. That is a credibility-ending problem if unverified citations make it into your manuscript.

A Nature analysis published this week estimates that at least tens of thousands of 2025 publications likely include invalid references generated by AI. At NeurIPS 2025, the top machine learning conference, GPTZero identified hallucinated citations in at least 53 accepted papers that survived peer review and were published in the final conference proceedings.

This is exactly why workflow-based thinking matters more than role-based thinking. If you think “AI is my reference finder” (a role), you will blindly trust its output. If you think “AI can accelerate the first pass of my literature search, but I verify every citation against PubMed before it enters my manuscript” (a workflow), you maintain quality control. The task-level lens forces you to define quality standards for each step. That is what protects you.

5. How I Built Research Boost Using This Exact Thinking

When I built Research Boost, I did not start by asking “Can AI write a paper?” That question is too big, too vague, and the answer is no (not responsibly, anyway).

I started by mapping my own manuscript writing workflow. Task by task. Then I asked: Where do I spend the most time? Where do errors creep in? Where does the process stall? Three bottlenecks stood out:

So I built an AI agent around those specific workflow steps. Not a generic chatbot. A system designed to do three things well.

First, it helps you find real, PubMed-indexed references using a hybrid approach (semantic AI search combined with traditional keyword search). No invented citations. No hallucinated DOIs.

Second, it helps you synthesize your study details and references into the 5 key story elements that drive every section of the manuscript: key findings, core message, research gap, problem, and clinical implications.

Third, it drafts each section of the manuscript following the IMRaD (Introduction, Methods, Results, and Discussion) structure, grounded in the references you have already vetted.

Every step requires your expertise. Your data goes in. Your judgment stays in the loop. The AI handles the drafting and structuring. You handle the thinking and the final word.

That is workflow-based thinking in practice. Not “AI writes my paper.” But “AI accelerates 6 of the 18 tasks in my writing workflow, and I stay in control of the other 12.”

(You can try it and see it in action for free here: https://researchboost.com/)

6. The Researcher’s Advantage Most People Don’t See

Here is the part that should encourage you. An article in MIT Sloan Management Review framed it this way: when AI tools provide many of the answers, the value of experts shifts from content knowledge to context. Their ability to ask better questions, recognize gray areas, and make judgment calls that algorithms cannot is what distinguishes useful AI use from dangerous AI use.

Clinical researchers have been training for this their entire careers. You know what a well-constructed Table 1 looks like. You know the difference between a methods section that a reviewer will accept and one that will get your paper desk-rejected. You know when a discussion section is speculating beyond the data versus interpreting within it.
A Dallas Fed analysis of AI’s labor market effects found that AI tends to complement jobs demanding experiential, tacit knowledge while automating those built on codifiable, textbook knowledge. Your clinical reasoning, your ability to interpret findings in the context of real patients and existing literature, the judgment calls that come from years of reading, writing, and reviewing papers: that is tacit knowledge. AI cannot replicate it.

After the launch of ChatGPT, employer demand for jobs requiring analytical and creative work grew 20%, while postings for structured, repetitive roles fell 13%. The premium is shifting toward people who can think, not just execute.

That domain expertise is your competitive advantage. Not your ability to write a clever prompt. Your ability to define “done” for each task in your research workflow, evaluate whether the AI met that standard, and correct course when it did not.

7. Your Action Plan for This Week

Here is what I want you to do:

→ Block 30 minutes this week. Write down every task involved in your current research project, from where you are now to submission.

→ Pick the task where you lose the most time. (For most people, this is literature searching or first-draft writing.)

→ Try using AI for just that one task. Give it your specific inputs: your research question, your study design, your key findings. See what comes back.

→ Evaluate the output the way you would evaluate a junior trainee’s work. Where is it right? Where is it off? What needs correction?

→ Repeat with the next task.

You are not trying to automate your job. You are trying to build a workflow where AI handles the high-volume, structured tasks while you focus on the judgment calls that only you can make.

That is how you publish faster without cutting corners. That is how you get your nights and weekends back. And that is how you stay in control of your work in an era when AI is changing the rules.
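If you prefer to keep your task list somewhere more structured than a notepad, the action plan can be sketched as a small task inventory. This is only an illustration: the task names, hour estimates, and quality checks below are hypothetical placeholders, not the 18-task list from this post, and `Task`, `WORKFLOW`, `biggest_time_sink`, and `split_workflow` are names made up for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_paper: float  # rough self-estimate; illustrative numbers only
    ai_can_draft: bool      # can AI produce a useful first pass?
    quality_check: str      # how you decide the output is "done"


# Hypothetical inventory. Replace with your own tasks and estimates.
WORKFLOW = [
    Task("Literature search", 12.0, True,
         "Verify every citation against PubMed"),
    Task("Draft methods section", 6.0, True,
         "Compare against the analysis plan"),
    Task("Format references", 3.0, True,
         "Spot-check against the journal style"),
    Task("Interpret findings", 8.0, False,
         "Author judgment; not delegated"),
    Task("Choose the research question", 5.0, False,
         "Author judgment; not delegated"),
]


def biggest_time_sink(tasks):
    """Return the AI-draftable task that costs the most hours."""
    candidates = [t for t in tasks if t.ai_can_draft]
    return max(candidates, key=lambda t: t.hours_per_paper)


def split_workflow(tasks):
    """Separate tasks AI can draft from tasks that stay fully with you."""
    ai = [t.name for t in tasks if t.ai_can_draft]
    human = [t.name for t in tasks if not t.ai_can_draft]
    return ai, human


if __name__ == "__main__":
    first = biggest_time_sink(WORKFLOW)
    print(f"Start with: {first.name} ({first.hours_per_paper} h)")
    print(f"Quality check: {first.quality_check}")
```

Restricting the first pick to tasks marked `ai_can_draft` is a design choice in this sketch, not a rule. The point is that every task carries its own quality check, so you decide what “done” means before you look at any AI output.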
The researchers who figure this out first will have a compounding advantage over everyone who keeps thinking in roles instead of workflows. You have years of clinical and research training telling you what good research looks like. Use it.

PROMPT OF THE WEEK

Travel planner: Conference season is coming up. Use the prompt below to plan your next trip. You can add more context to get more tailored answers. For example: “I am traveling with my wife and three kids aged 3 and 5 years of age.”

P.S. If you want a purpose-built tool specifically for the academic writing workflow (your critical insights + literature grounding + structured IMRaD workflow), that’s what we built Research Boost to do. Try it FREE at https://researchboost.com/

The post This One Research Mindset Shift That Will Help You Win With AI: From Role-Based Thinking to Workflow-Based Thinking appeared first on Rising Researcher Academy.

Best wishes,
Paras

Paras Karmacharya, MD MS
Founder @Rising Researcher Academy
